On Resolution & Parameterization

Numerical weather modeling splits up the globe into a series of three-dimensional pixels. It applies a ton of math to the data representing each of those pixels to make predictions about the movement, intensity and impact of weather systems. These predictions are generated by specific models developed by groups of people in various weather agencies/administrations around the world, and some are better than others.

The systems that process these tasks are some of the most complex and powerful on the planet, and with good reason. Accurate weather predictions – especially some days out – are extraordinarily difficult to create, as “weather” is one of the most complex things we interact with on a daily basis. Further, billions of dollars and thousands of lives hang in the balance.

Right. So two interesting things worth looking at here: resolution and parameterization.

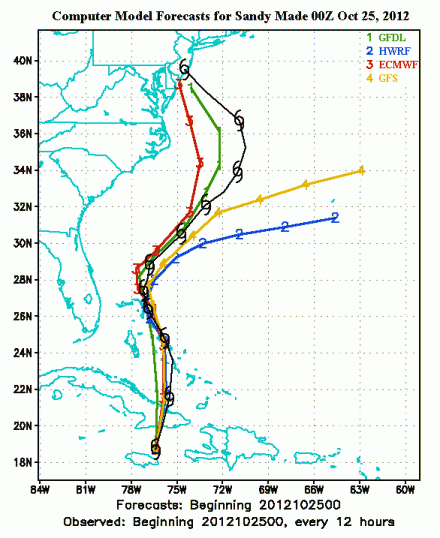

With complex systems, the accuracy of any prediction of future behavior is directly connected to the resolution of the data provided to the predictor(s). Consider Europe’s forecasting service (the European Centre for Medium-Range Weather Forecasts, or ECMWF) vs. the model used in the United States (the Global Forecast System, or GFS): the ECMWF model operates with 16km-wide “pixels” of information, and predicted Hurricane Sandy’s western turn, where the GFS’s 28km-wide pixels did not.
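To get a feel for what that difference in pixel width means, here is a rough back-of-the-envelope sketch. It assumes the globe is tiled with uniform square cells, which real models do not do (their grids are more elaborate), and the function name `horizontal_cells` is just something made up for this illustration. It only counts cells in a single horizontal layer.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean radius; good enough for a rough count


def horizontal_cells(cell_width_km: float) -> float:
    """Approximate number of square cells needed to tile Earth's surface.

    Assumption: uniform square cells, one horizontal layer only.
    """
    surface_area_km2 = 4 * math.pi * EARTH_RADIUS_KM ** 2
    return surface_area_km2 / cell_width_km ** 2


fine = horizontal_cells(16)    # the 16km pixels mentioned above
coarse = horizontal_cells(28)  # the 28km pixels mentioned above
ratio = fine / coarse

print(f"16km grid: about {fine:,.0f} cells per layer")
print(f"28km grid: about {coarse:,.0f} cells per layer")
print(f"ratio: about {ratio:.2f}x")
```

The ratio works out to (28/16)², a bit over 3, so shrinking the pixel width by less than half roughly triples the number of cells in each layer, before accounting for vertical levels or time steps.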

But in all complex systems, modelers must accept that there is a level of detail that is unresolvable. Sure, in principle there’s a point where everything is measurable, and it’s computationally possible to use raindrop-level data in an analysis of a weather system, but until we reach that point weather modelers use parameters as stand-ins for processes that are too small or too numerous to include. This is called “parameterization” and I think it’s awesome. (For what it’s worth, we all do this when we make predictions, but in weather modeling there’s a formal process for how it works.)
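A toy sketch of the idea, under loud assumptions: the model knows only the average relative humidity of a grid cell, not where the individual clouds are, so a simple formula stands in for all that sub-pixel detail. The function `cloud_fraction` and the threshold value `rh_crit=0.8` are hypothetical choices for illustration, loosely in the spirit of humidity-based cloud schemes, and not the scheme any particular model uses.

```python
import math


def cloud_fraction(rh: float, rh_crit: float = 0.8) -> float:
    """Toy parameterization: estimate the cloudy fraction of a grid cell
    from its mean relative humidity (0.0 to 1.0).

    Assumption: no cloud below the hypothetical threshold rh_crit,
    full cover at saturation, and a smooth ramp in between.
    """
    rh = min(max(rh, 0.0), 1.0)  # clamp to a physically sensible range
    if rh <= rh_crit:
        return 0.0
    return 1.0 - math.sqrt((1.0 - rh) / (1.0 - rh_crit))


# One number per cell stands in for countless unresolved droplets.
for rh in (0.5, 0.85, 0.95, 1.0):
    print(f"mean RH {rh:.2f} -> estimated cloud fraction {cloud_fraction(rh):.2f}")
```

The point is not the particular formula but the trade: one cheap parameter per pixel replaces detail the grid cannot resolve.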
